Auto Correct

Has the self-driving car at last arrived?

by Burkhard Bilger

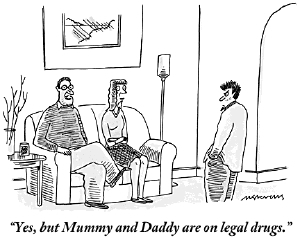

The Google car knows every turn. It never gets drowsy or distracted, or wonders who has the right-of-way. Illustration by Harry Campbell.

Human beings make terrible drivers. They talk on the phone and run red lights, signal to the left and turn to the right. They drink too much beer and plow into trees or veer into traffic as they swat at their kids. They have blind spots, leg cramps, seizures, and heart attacks. They rubberneck, hotdog, and take pity on turtles, cause fender benders, pileups, and head-on collisions. They nod off at the wheel, wrestle with maps, fiddle with knobs, have marital spats, take the curve too late, take the curve too hard, spill coffee in their laps, and flip over their cars. Of the ten million accidents that Americans are in every year, nine and a half million are their own damn fault.

A case in point: The driver in the lane to my right. He’s twisted halfway around in his seat, taking a picture of the Lexus that I’m riding in with an engineer named Anthony Levandowski. Both cars are heading south on Highway 880 in Oakland, going more than seventy miles an hour, yet the man takes his time. He holds his phone up to the window with both hands until the car is framed just so. Then he snaps the picture, checks it onscreen, and taps out a lengthy text message with his thumbs. By the time he puts his hands back on the wheel and glances up at the road, half a minute has passed.

Levandowski shakes his head. He’s used to this sort of thing. His Lexus is what you might call a custom model. It’s surmounted by a spinning laser turret and knobbed with cameras, radar, antennas, and G.P.S. It looks a little like an ice-cream truck, lightly weaponized for inner-city work. Levandowski used to tell people that the car was designed to chase tornadoes or to track mosquitoes, or that he belonged to an élite team of ghost hunters. But nowadays the vehicle is clearly marked: “Self-Driving Car.”

Every week for the past year and a half, Levandowski has taken the Lexus on the same slightly surreal commute. He leaves his house in Berkeley at around eight o’clock, waves goodbye to his fiancée and their son, and drives to his office in Mountain View, forty-three miles away. The ride takes him over surface streets and freeways, old salt flats and pine-green foothills, across the gusty blue of San Francisco Bay, and down into the heart of Silicon Valley. In rush-hour traffic, it can take two hours, but Levandowski doesn’t mind. He thinks of it as research. While other drivers are gawking at him, he is observing them: recording their maneuvers in his car’s sensor logs, analyzing traffic flow, and flagging any problems for future review. The only tiresome part is when there’s roadwork or an accident ahead and the Lexus insists that he take the wheel. A chime sounds, pleasant yet insistent, then a warning appears on his dashboard screen: “In one mile, prepare to resume manual control.”

Levandowski is an engineer at Google X, the company’s semi-secret lab for experimental technology. He turned thirty-three last March but still has the spindly build and nerdy good nature of the kids in my high-school science club. He wears black frame glasses and oversized neon sneakers, has a long, loping stride—he’s six feet seven—and is given to excitable talk on fantastical themes. Cybernetic dolphins! Self-harvesting farms! Like a lot of his colleagues in Mountain View, Levandowski is equal parts idealist and voracious capitalist. He wants to fix the world and make a fortune doing it. He comes by these impulses honestly: his mother is a French diplomat, his father an American businessman. Although Levandowski spent most of his childhood in Brussels, his English has no accent aside from a certain absence of inflection—the bright, electric chatter of a processor in overdrive. “My fiancée is a dancer in her soul,” he told me. “I’m a robot.”

What separates Levandowski from the nerds I knew is this: his wacky ideas tend to come true. “I only do cool shit,” he says. As a freshman at Berkeley, he launched an intranet service out of his basement that earned him fifty thousand dollars a year. As a sophomore, he won a national robotics competition with a machine made out of Legos that could sort Monopoly money—a fair analogy for what he’s been doing for Google lately. He was one of the principal architects of Street View and the Google Maps database, but those were just warmups. “The Wright Brothers era is over,” Levandowski assured me, as the Lexus took us across the Dumbarton Bridge. “This is more like Charles Lindbergh’s plane. And we’re trying to make it as robust and reliable as a 747.”

Not everyone finds this prospect appealing. As a commercial for the Dodge Charger put it two years ago, “Hands-free driving, cars that park themselves, an unmanned car driven by a search-engine company? We’ve seen that movie. It ends with robots harvesting our bodies for energy.” Levandowski understands the sentiment. He just has more faith in robots than most of us do. “People think that we’re going to pry the steering wheel from their cold, dead hands,” he told me, but they have it exactly wrong. Someday soon, he believes, a self-driving car will save your life.

The Google car is an old-fashioned sort of science fiction: this year’s model of last century’s make. It belongs to the gleaming, chrome-plated age of jet packs and rocket ships, transporter beams and cities beneath the sea, of a predicted future still well beyond our technology. In 1939, at the World’s Fair in New York, visitors stood in lines up to two miles long to see the General Motors Futurama exhibit. Inside, a conveyor belt carried them high above a miniature landscape, spread out beneath a glass dome. Its suburbs and skyscrapers were laced together by superhighways full of radio-guided cars. “Does it seem strange? Unbelievable?” the announcer asked. “Remember, this is the world of 1960.”

Not quite. Skyscrapers and superhighways made the deadline, but driverless cars still putter along in prototype. Human beings, as it turns out, aren’t easy to improve upon. For every accident they cause, they avoid a thousand others. They can weave through tight traffic and anticipate danger, gauge distance, direction, pace, and momentum. Americans drive nearly three trillion miles a year, I was told by Ron Medford, a former deputy administrator of the National Highway Traffic Safety Administration who now works for Google. It’s no wonder that we have thirty-two thousand fatalities along the way, he said. It’s a wonder the number is so low.

Levandowski keeps a collection of vintage illustrations and newsreels on his laptop, just to remind him of all the failed schemes and fizzled technologies of the past. When he showed them to me one night at his house, his face wore a crooked grin, like a father watching his son strike out in Little League. From 1957: A sedan cruises down a highway, guided by circuits in the road, while a family plays dominoes inside. “No traffic jam . . . no collisions . . . no driver fatigue.” From 1977: Engineers huddle around a driverless Ford on a test track. “Cars like this one may be on the nation’s roads by the year 2000!” Levandowski shook his head. “We didn’t come up with this idea,” he said. “We just got lucky that the computers and sensors were ready for us.”

Almost from the beginning, the field divided into two rival camps: smart roads and smart cars. General Motors pioneered the first approach in the late nineteen-fifties. Its Firebird III concept car—shaped like a jet fighter, with titanium tail fins and a glass-bubble cockpit—was designed to run on a test track embedded with an electrical cable, like the slot on a toy speedway. As the car passed over the cable, a receiver in its front end picked up a radio signal and followed it around the curve. Engineers at Berkeley later went a step further: they spiked the track with magnets, alternating their polarity in binary patterns to send messages to the car—“Slow down, sharp curve ahead.” Systems like these were fairly simple and reliable, but they had a chicken-and-egg problem. To be useful, they had to be built on a large scale; to be built on a large scale, they had to be useful. “We don’t have the money to fix potholes,” Levandowski says. “Why would we invest in putting wires in the road?”

Smart cars were more flexible but also more complex. They needed sensors to guide them, computers to steer them, digital maps to follow. In the nineteen-eighties, a German engineer named Ernst Dickmanns, at the Bundeswehr University in Munich, equipped a Mercedes van with video cameras and processors, then programmed it to follow lane lines. Soon it was steering itself around a track. By 1995, Dickmanns’s car was able to drive on the Autobahn from Munich to Odense, Denmark, going up to a hundred miles at a stretch without assistance. Surely the driverless age was at hand! Not yet. Smart cars were just clever enough to get drivers into trouble. The highways and test tracks they navigated were strictly controlled environments. The instant more variables were added—a pedestrian, say, or a traffic cop—their programming faltered. Ninety-eight per cent of driving is just following the dotted line. It’s the other two per cent that matters.

“There was no way, before 2000, to make something interesting,” the roboticist Sebastian Thrun told me. “The sensors weren’t there, the computers weren’t there, and the mapping wasn’t there. Radar was a device on a hilltop that cost two hundred million dollars. It wasn’t something you could buy at Radio Shack.” Thrun, who is forty-six, is the founder of the Google Car project. A wunderkind from the west German city of Solingen, he programmed his first driving simulator at the age of twelve. Slender and tan, with clear blue eyes and a smooth, seemingly boneless gait, he looks as if he just stepped off a dance floor in Ibiza. And yet, like Levandowski, he has a gift for seeing things through a machine’s eyes—for intuiting the logic by which it might apprehend the world.

When Thrun first arrived in the United States, in 1995, he took a job at the country’s leading center for driverless-car research: Carnegie Mellon University. He went on to build robots that explored mines in Virginia, guided visitors through the Smithsonian, and chatted with patients at a nursing home. What he didn’t build was driverless cars. Funding for private research in the field had dried up by then. And though Congress had set a goal that a third of all ground combat vehicles be autonomous by 2015, little had come of the effort. Every so often, Thrun recalls, military contractors, funded by the Defense Advanced Research Projects Agency, would roll out their latest prototype. “The demonstrations I saw mostly ended in crashes and breakdowns in the first half mile,” he told me. “DARPA was funding people who weren’t solving the problem. But they couldn’t tell if it was the technology or the people. So they did this crazy thing, which was really visionary.”

They held a race.

The first DARPA Grand Challenge took place in the Mojave Desert on March 13, 2004. It offered a million-dollar prize for what seemed like a simple task: build a car that can drive a hundred and forty-two miles without human intervention. Ernst Dickmanns’s car had gone similar distances on the Autobahn, but always with a driver in the seat to take over in the tricky stretches. The cars in the Grand Challenge would be empty, and the road would be rough: from Barstow, California, to Primm, Nevada. Instead of smooth curves and long straightaways, it had rocky climbs and hairpin turns; instead of road signs and lane lines, G.P.S. waypoints. “Today, we could do it in a few hours,” Thrun told me. “But at the time it felt like going to the moon in sneakers.”

Levandowski first heard about it from his mother. She’d seen a notice for the race when it was announced online, in 2002, and recalled that her son used to play with remote-control cars as a boy, crashing them into things on his bedroom floor. Was this so different? Levandowski was now a student at Berkeley, in the industrial-engineering department. When he wasn’t studying or rowing crew or winning Lego competitions, he was casting about for cool new shit to build—for a profit, if possible. “If he’s making money, it’s his confirmation that he’s creating value,” his friend Randy Miller told me. “I remember, when we were in college, we were at his house one day, and he told me that he’d rented out his bedroom. He’d put up a wall in his living room and was sleeping on a couch in one half, next to a big server tower that he’d built. I said, ‘Anthony, what the hell are you doing? You’ve got plenty of money. Why don’t you get your own place?’ And he said, ‘No. Until I can move to a stateroom on a 747, I want to live like this.’ ”

DARPA’s rules were vague on the subject of vehicles: anything that could drive itself would do. So Levandowski made a bold decision. He would build the world’s first autonomous motorcycle. This seemed like a stroke of genius at the time. (Miller says that it came to them in a hot tub in Tahoe, which sounds about right.) Good engineering is all about gaming the system, Levandowski says—about sidestepping obstacles rather than trying to run over them. His favorite example is from a robotics contest at M.I.T. in 1991. Tasked with building a machine that could shoot the most Ping-Pong balls into a tube, the students came up with dozens of ingenious contraptions. The winner, though, was infuriatingly simple: it had a mechanical arm reach over, drop a ball into the tube, then cover it up so that no others could get in. It won the contest in a single move. The motorcycle could be like that, Levandowski thought: quicker off the mark than a car and more maneuverable. It could slip through tighter barriers and drive just as fast. Also, it was a good way to get back at his mother, who’d never let him ride motorcycles as a kid. “Fine,” he thought. “I’ll just make one that rides itself.”

The flaw in this plan was obvious: a motorcycle can’t stand up on its own. It needs a rider to balance it—or else a complex, computer-controlled system of shafts and motors to adjust its position every hundredth of a second. “Before you can drive ten feet you have to do a year of engineering,” Levandowski says. The other racers had no such problem. They also had substantial academic and corporate backing: the Carnegie Mellon team was working with General Motors, Caltech with Northrop Grumman, Ohio State with Oshkosh trucking. When Levandowski went to the Berkeley faculty with his idea, the reaction was, at best, bemused disbelief. His adviser, Ken Goldberg, told him frankly that he had no chance of winning. “Anthony is probably the most creative undergraduate I’ve encountered in twenty years,” he told me. “But this was a very great stretch.”

Levandowski was unfazed. Over the next two years, he made more than two hundred cold calls to potential sponsors. He gradually scraped together thirty thousand dollars from Raytheon, Advanced Micro Devices, and others. (No motorcycle company was willing to put its name on the project.) Then he added a hundred thousand dollars of his own. In the meantime, he went about poaching the faculty’s graduate students. “He paid us in burritos,” Charles Smart, now a professor of mathematics at M.I.T., told me. “Always the same burritos. But I remember thinking, I hope he likes me and lets me work on this.” Levandowski had that effect on people. His mad enthusiasm for the project was matched only by his technical grasp of its challenges—and his willingness to go to any lengths to meet them. At one point, he offered Smart’s girlfriend and future wife five thousand dollars to break up with him until the project was done. “He was fairly serious,” Smart told me. “She hated the motorcycle project.”

There came a day when Goldberg realized that half his Ph.D. students had been working for Levandowski. They’d begun with a Yamaha dirt bike, made for a child, and stripped it down to its skeleton. They added cameras, gyros, G.P.S. modules, computers, roll bars, and an electric motor to turn the wheel. They wrote tens of thousands of lines of code. The videos of their early test runs, edited together, play like a jittery reel from “The Benny Hill Show”: bike takes off, engineers jump up and down, bike falls over—more than six hundred times in a row. “We built the bike and rebuilt the bike, just sort of groping in the dark,” Smart told me. “It’s like one of my colleagues once said: ‘You don’t understand, Charlie, this is robotics. Nothing actually works.’ ”

Finally, a year into the project, a Russian engineer named Alex Krasnov cracked the code. They’d thought that stability was a complex, nonlinear problem, but it turned out to be fairly simple. When the bike tipped to one side, Krasnov had it steer ever so slightly in the same direction. This created centrifugal acceleration that pulled the bike upright again. By doing this over and over, tracing tiny S-curves as it went, the motorcycle could hold to a straight line. On the video clip from that day, the bike wobbles a little at first, like a baby giraffe finding its legs, then suddenly, confidently circles the field—as if guided by an invisible hand. They called it the Ghost Rider.
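For readers who want to see why steering into the fall works, here is a minimal sketch. It is not the Ghost Rider's code. It assumes a textbook point-mass model of a bike, linearized for small lean angles, and every name and number in it (`simulate_lean`, the gains, the speed, the height, the wheelbase) is a hypothetical choice made for illustration. The hundredth-of-a-second update interval is the only figure borrowed from the story.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def simulate_lean(steer_gain, damping_gain, lean0=0.05, speed=5.0,
                  height=0.5, wheelbase=1.0, dt=0.01, seconds=5.0):
    """Return the lean angle (radians) at each time step.

    Assumed model: lean'' = (g/h) * lean - (v^2 / (h * w)) * steer.
    Gravity tips the bike further over; steering toward the lean
    produces a centrifugal term that pushes it back upright.
    The loop stops early if the bike passes 90 degrees (it has fallen).
    """
    lean, lean_rate = lean0, 0.0
    history = [lean]
    for _ in range(round(seconds / dt)):
        # Steer in the same direction as the lean, plus a damping term.
        steer = steer_gain * lean + damping_gain * lean_rate
        lean_accel = (G / height) * lean \
            - (speed ** 2 / (height * wheelbase)) * steer
        lean_rate += lean_accel * dt
        lean += lean_rate * dt
        history.append(lean)
        if abs(lean) > math.pi / 2:
            break
    return history


if __name__ == "__main__":
    balanced = simulate_lean(steer_gain=2.0, damping_gain=0.5)
    unsteered = simulate_lean(steer_gain=0.0, damping_gain=0.0)
    print("with steering, final lean (rad):", balanced[-1])
    print("without steering, steps before falling:", len(unsteered) - 1)
```

In this toy model the steering gain has to exceed g·w/v² before the bike recovers, which is one way of seeing why a motorcycle is easier to balance at speed than at a crawl.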

The Grand Challenge proved to be one of the more humbling events in automotive history. Its sole consolation lay in shared misery. None of the fifteen finalists made it past the first ten miles; seven broke down within a mile. Ohio State’s six-wheel, thirty-thousand-pound TerraMax was brought up short by some bushes; Caltech’s Chevy Tahoe crashed into a fence. Even the winner, Carnegie Mellon, earned at best a Pyrrhic victory. Its robotic Humvee, Sandstorm, drove just seven and a half miles before careering off course. A helicopter later found it beached on an embankment, wreathed in smoke, its back wheels spinning so furiously that they’d burst into flame.

As for the Ghost Rider, it managed to beat out more than ninety cars in the qualifying round—a mile-and-a-half obstacle course on the California Speedway in Fontana. But that was its high-water mark. On the day of the Grand Challenge, standing at the starting line in Barstow, half delirious with adrenaline and fatigue, Levandowski forgot to turn on the stability program. When the gun went off, the bike sputtered forward, rolled three feet, and fell over.

“That was a dark day,” Levandowski says. It took him a while to get over it—at least by his hyperactive standards. “I think I took, like, four days off,” he told me. “And then I was like, Hey, I’m not done yet! I need to go fix this!” DARPA apparently had the same thought. Three months later, the agency announced a second Grand Challenge for the following October, doubling the prize money to two million dollars. To win, the teams would have to address a daunting list of failures and shortcomings, from fried hard drives to faulty satellite equipment. But the underlying issue was always the same: as Joshua Davis later wrote in Wired, the robots just weren’t smart enough. In the wrong light, they couldn’t tell a bush from a boulder, a shadow from a solid object. They reduced the world to a giant marble maze, then got caught in the thickets between holes. They needed to raise their I.Q.

In the early nineties, Dean Pomerleau, a roboticist at Carnegie Mellon, had hit upon an unusually efficient way to do this: he let his car teach itself. Pomerleau equipped the computer in his minivan with artificial neural networks, modelled on those in the brain. As he drove around Pittsburgh, they kept track of his driving decisions, gathering statistics and formulating their own rules of the road. “When we started, the car was going about two to four miles an hour along a path through a park—you could ride a tricycle faster,” Pomerleau told me. “By the end, it was going fifty-five miles per hour on highways.” In 1996, the car steered itself from Washington, D.C., to San Diego with only minimal intervention—nearly four times as far as Ernst Dickmanns’s cars had gone a year earlier. “No Hands Across America,” Pomerleau called it.

Machine learning is an idea nearly as old as computer science—Alan Turing, one of the fathers of the field, considered it the essence of artificial intelligence. It’s often the fastest way for a computer to learn a complex behavior, but it has its drawbacks. A self-taught car can come to some strange conclusions. It may confuse the shadow of a tree for the edge of the road, or reflected headlights for lane markers. It may decide that a bag floating across a road is a solid object and swerve to avoid it. It’s like a baby in a stroller, deducing the world from the faces and storefronts that flicker by. It’s hard to know what it knows. “Neural networks are like black boxes,” Pomerleau says. “That makes people nervous, particularly when they’re controlling a two-ton vehicle.”

Computers, like children, are more often taught by rote. They’re given thousands of rules and bits of data to memorize—If X happens, do Y; avoid big rocks—then sent out to test them by trial and error. This is slow, painstaking work, but it’s easier to predict and refine than machine learning. The trick, as in any educational system, is to combine the two in proper measure. Too much rote learning can make for a plodding machine. Too much experiential learning can make for blind spots and caprice. The roughest roads in the Grand Challenge were often the easiest to navigate, because they had clear paths and well-defined shoulders. It was on the open, sandy trails that the cars tended to go crazy. “Put too much intelligence into a car and it becomes creative,” Sebastian Thrun told me.

The second Grand Challenge put these two approaches to the test. Nearly two hundred teams signed up for the race, but the top contenders were clear from the start: Carnegie Mellon and Stanford. The C.M.U. team was led by the legendary roboticist William (Red) Whittaker. (Pomerleau had left the university by then to start his own firm.) A burly, mortar-headed ex-marine, Whittaker specialized in machines for remote and dangerous locations. His robots had crawled over Antarctic ice fields and active volcanoes, and inspected the damaged nuclear reactors at Three Mile Island and Chernobyl. Seconded by a brilliant young engineer named Chris Urmson, Whittaker approached the race as a military operation, best won by overwhelming force. His team spent twenty-eight days laser-scanning the Mojave to create a computer model of its topography; then they combined those scans with satellite data to help identify obstacles. “People don’t count those who died trying,” he later told me.

The Stanford team was led by Thrun. He hadn’t taken part in the first race, when he was still just a junior faculty member at C.M.U. But by the following summer he had accepted an endowed professorship in Palo Alto. When DARPA announced the second race, he heard about it from one of his Ph.D. students, Mike Montemerlo. “His assessment of whether we should do it was no, but his body and his eyes and everything about him said yes,” Thrun recalls. “So he dragged me into it.” The contest would be a study in opposites: Thrun the suave cosmopolitan; Whittaker the blustering field marshal. Carnegie Mellon with its two military vehicles, Sandstorm and Highlander; Stanford with its puny Volkswagen Touareg, nicknamed Stanley.

It was an even match. Both teams used similar sensors and software, but Thrun and Montemerlo concentrated more heavily on machine learning. “It was our secret weapon,” Thrun told me. Rather than program the car with models of the rocks and bushes it should avoid, Thrun and Montemerlo simply drove it down the middle of a desert road. The lasers on the roof scanned the area around the car, while the camera looked farther ahead. By analyzing this data, the computer learned to identify the flat parts as road and the bumpy parts as shoulders. It also compared its camera images with its laser scans, so that it could tell what flat terrain looked like from a distance—and therefore drive a lot faster. “Every day it was the same,” Thrun recalls. “We would go out, drive for twenty minutes, realize there was some software bug, then sit there for four hours reprogramming and try again. We did that for four months.” When they started, one out of every eight pixels that the computer labelled as an obstacle was nothing of the sort. By the time they were done, the error rate had dropped to one in fifty thousand.
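The idea of letting the laser teach the camera can be sketched in a few lines. This is not the Stanford team's software. It is a toy version under loud assumptions: each patch of ground is reduced to a single brightness value, the laser supplies a roughness reading only for nearby patches, and the function names and thresholds (`learn_road_color`, `looks_like_road`, `roughness_limit`, `tolerance`) are hypothetical.

```python
from statistics import mean, pstdev


def learn_road_color(near_patches, roughness_limit=0.05):
    """Build a brightness model of drivable ground.

    near_patches: list of (roughness_m, brightness) pairs for ground
    close enough for the laser to measure. Patches the laser finds
    flat are treated as road, and their camera brightness is
    summarized as (mean, standard deviation).
    """
    road = [b for roughness, b in near_patches if roughness < roughness_limit]
    return mean(road), pstdev(road)


def looks_like_road(brightness, model, tolerance=3.0):
    """Guess whether a distant patch, seen only by the camera, is road."""
    mu, sigma = model
    # Guard against a zero spread when all road samples are identical.
    return abs(brightness - mu) <= tolerance * max(sigma, 1e-6)


if __name__ == "__main__":
    near = [(0.01, 0.60), (0.02, 0.62), (0.01, 0.58),  # flat: road
            (0.20, 0.20), (0.30, 0.25)]                # bumpy: shoulder
    model = learn_road_color(near)
    print("far patch at 0.61 is road:", looks_like_road(0.61, model))
    print("far patch at 0.22 is road:", looks_like_road(0.22, model))
```

As for the numbers Thrun cites, going from one mislabelled obstacle pixel in eight to one in fifty thousand is a 6,250-fold reduction.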

On the day of the race, two hours before start time, DARPA sent out the G.P.S. coördinates for the course. It was even harder than the first time: more turns, narrower lanes, three tunnels, and a mountain pass. Carnegie Mellon, with two cars to Stanford’s one, decided to play it safe. They had Highlander run at a fast clip—more than twenty miles an hour on average—while Sandstorm hung back a little. The difference was enough to cost them the race. When Highlander began to lose power because of a pinched fuel line, Stanley moved ahead. By the time it crossed the finish line, six hours and fifty-three minutes after it started, it was more than ten minutes ahead of Sandstorm and more than twenty minutes ahead of Highlander.

It was a triumph of the underdog, of brain over brawn. But less for Stanford than for the field as a whole. Five cars finished the hundred-and-thirty-two-mile course; more than twenty cars went farther than the winner had in 2004. In one year, they’d made more progress than DARPA’s contractors had in twenty. “You had these crazy people who didn’t know how hard it was,” Thrun told me. “They said, ‘Look, I have a car, I have a computer, and I need a million bucks.’ So they were doing things in their home shops, putting something together that had never been done in robotics before, and some were insanely impressive.” A team of students from Palos Verdes High School in California, led by a seventeen-year-old named Chris Seide, built a self-driving “Doom Buggy” that, Thrun recalls, could change lanes and stop at stop signs. A Ford S.U.V. programmed by some insurance-company employees from Louisiana finished just thirty-seven minutes behind Stanley. Their lead programmer had lifted his preliminary algorithms from textbooks on video-game design.

“When you look back at that first Grand Challenge, we were in the Stone Age compared to where we are now,” Levandowski told me. His motorcycle embodied that evolution. Although it never made it out of the semifinals of the second race—tripped up by some wooden boards—the Ghost Rider had become, in its way, a marvel of engineering, beating out seventy-eight four-wheeled competitors. Two years later, the Smithsonian added the motorcycle to its collection; a year after that, it added Stanley as well. By then, Thrun and Levandowski were both working for Google.

The driverless-car project occupies a lofty, garagelike space in suburban Mountain View. It’s part of a sprawling campus built by Silicon Graphics in the early nineties and repurposed by Google, the conquering army, a decade later. Like a lot of high-tech offices, it’s a mixture of the whimsical and the workaholic—candy-colored sheet metal over a sprung-steel chassis. There’s a Foosball table in the lobby, exercise balls in the sitting room, and a row of what look like clown bicycles parked out front, free for the taking. When you walk in, the first things you notice are the wacky tchotchkes on the desks: Smurfs, “Star Wars” toys, Rube Goldberg devices. The next things you notice are the desks: row after row after row, each with someone staring hard at a screen.

It had taken me two years to gain access to this place, and then only with a staff member shadowing my every step. Google guards its secrets more jealously than most. At the gourmet cafeterias that dot the campus, signs warn against “tailgaters”—corporate spies who might slink in behind an employee before the door swings shut. Once inside, though, the atmosphere shifts from vigilance to an almost missionary zeal. “We want to fundamentally change the world with this,” Sergey Brin, the co-founder of Google, told me.

Brin was dressed in a charcoal hoodie, baggy pants, and sneakers. His scruffy beard and flat, piercing gaze gave him a Rasputinish quality, dulled somewhat by his Google Glass eyewear. At one point, he asked if I’d like to try the glasses on. When I’d positioned the miniature projector in front of my right eye, a single line of text floated poignantly into view: “3:51 P.M. It’s okay.”

“As you look outside, and walk through parking lots and past multilane roads, the transportation infrastructure dominates,” Brin said. “It’s a huge tax on the land.” Most cars are used only for an hour or two a day, he said. The rest of the time, they’re parked on the street or in driveways and garages. But if cars could drive themselves, there would be no need for most people to own them. A fleet of vehicles could operate as a personalized public-transportation system, picking people up and dropping them off independently, waiting at parking lots between calls. They’d be cheaper and more efficient than taxis—by some calculations, they’d use half the fuel and a fifth the road space of ordinary cars—and far more flexible than buses or subways. Streets would clear, highways shrink, parking lots turn to parkland. “We’re not trying to fit into an existing business model,” Brin said. “We are just on such a different planet.”

When Thrun and Levandowski first came to Google, in 2007, they were given a simpler task: to create a virtual map of the country. The idea came from Larry Page, the company’s other co-founder. Five years earlier, Page had strapped a video camera on his car and taken several hours of footage around the Bay Area. He’d then sent it to Marc Levoy, a computer-graphics expert at Stanford, who created a program that could paste such footage together to show an entire streetscape. Google engineers went on to jury-rig some vans with G.P.S. and rooftop cameras that could shoot in every direction. Eventually, they were able to launch a system that could show three-hundred-and-sixty-degree panoramas for any address. But the equipment was unreliable. When Thrun and Levandowski came on board, they helped the team retool and reprogram. Then they equipped a hundred cars and sent them all over the United States.

Google Street View has since spread to more than a hundred countries. It’s both a practical tool and a kind of magic trick—a spyglass onto distant worlds. To Levandowski, though, it was just a start. The same data, he argued, could be used to make digital maps more accurate than those based on G.P.S. data, which Google had been leasing from companies like NAVTEQ. The street and exit names could be drawn straight from photographs, for instance, rather than faulty government records. This sounded simple enough but proved to be fiendishly complicated. Street View mostly covered urban areas, but Google Maps had to be comprehensive: every logging road logged on a computer, every gravel drive driven down. Over the next two years, Levandowski shuttled back and forth to Hyderabad, India, to train more than two thousand data processors to create new maps and fix old ones. When Apple’s new mapping software failed so spectacularly a year ago, he knew exactly why. By then, his team had spent five years entering several million corrections a day.

Street View and Maps were logical extensions of a Google search. They showed you where to locate the things you’d found. What was missing was a way to get there. Thrun, despite his victory in the second Grand Challenge, didn’t think that driverless cars could work on surface streets—there were just too many variables. “I would have told you then that there is no way on earth we can drive safely,” he says. “All of us were in denial that this could be done.” Then, in February of 2008, Levandowski got a call from a producer of “Prototype This!,” a series on the Discovery Channel. Would he be interested in building a self-driving pizza delivery car? Within five weeks, he and a team of fellow Berkeley graduates and other engineers had retrofitted a Prius for the purpose. They patched together a guidance system and persuaded the California Highway Patrol to let the car cross the Bay Bridge—from San Francisco to Treasure Island. It would be the first time an unmanned car had driven legally on American streets.

On the day of the filming, the city looked as if it were under martial law. The lower level of the bridge was closed to regular traffic, and eight police cruisers and eight motorcycle cops were assigned to accompany the Prius over it. “Obama was there the week before and he had a smaller escort,” Levandowski recalls. The car made its way through downtown and crossed the bridge in fine form, only to wedge itself against a concrete wall on the far side. Still, it gave Google the nudge that it needed. Within a few months, Page and Brin had called Thrun to green-light a driverless-car project. “They didn’t even talk about budget,” Thrun says. “They just asked how many people I needed and how to find them. I said, ‘I know exactly who they are.’ ”

Every Monday at eleven-thirty, the lead engineers for the Google car project meet for a status update. They mostly cleave to a familiar Silicon Valley demographic—white, male, thirty to forty years old—but they come from all over the world. I counted members from Belgium, Holland, Canada, New Zealand, France, Germany, China, and Russia at one sitting. Thrun began by cherry-picking the top talent from the Grand Challenges: Chris Urmson was hired to develop the software, Levandowski the hardware, Mike Montemerlo the digital maps. (Urmson now directs the project, while Thrun has shifted his attention to Udacity, an online education company that he co-founded two years ago.) Then they branched out to prodigies of other sorts: lawyers, laser designers, interface gurus—anyone, at first, except automotive engineers. “We hired a new breed,” Thrun told me. People at Google X had a habit of saying that So-and-So on the team was the smartest person they’d ever met, till the virtuous circle closed and almost everyone had been singled out by someone else. As Levandowski said of Thrun, “He thinks at a hundred miles an hour. I like to think at ninety.”

When I walked in one morning, the team was slouched around a conference table in T-shirts and jeans, discussing the difference between the Gregorian and the Julian calendar. The subtext, as usual, was time. Google’s goal isn’t to create a glorified concept car—a flashy idea that will never make it to the street—but a polished commercial product. That means real deadlines and continual tests and redesigns. The main topic for much of that morning was the user interface. How aggressive should the warning sounds be? How many pedestrians should the screen show? In one version, a jaywalker appeared as a red dot outlined in white. “I really don’t like that,” Urmson said. “It looks like a real-estate sign.” The Dutch designer nodded and promised an alternative for the next round. Every week, several dozen Google volunteers test-drive the cars and fill out user surveys. “In God we trust,” the company faithful like to say. “Everyone else, bring data.”

In the beginning, Brin and Page presented Thrun’s team with a series of DARPA-like challenges. They managed the first in less than a year: to drive a hundred thousand miles on public roads. Then the stakes went up. Like boys plotting a scavenger hunt, Brin and Page pieced together ten itineraries of a hundred miles each. The roads wound through every part of the Bay Area—from the leafy lanes of Menlo Park to the switchbacks of Lombard Street. If the driver took the wheel or tapped the brakes even once, the trip was disqualified. “I remember thinking, How can you possibly do that?” Urmson told me. “It’s hard to game driving through the middle of San Francisco.”

They started the project with Levandowski’s pizza car and Stanford’s open-source software. But they soon found that they had to rebuild from scratch: the car’s sensors were already outdated, the software just glitchy enough to be useless. The DARPA cars hadn’t concerned themselves with passenger comfort. They just went from point A to point B as efficiently as possible. To smooth out the ride, Thrun and Urmson had to make a deep study of the physics of driving. How does the plane of a road change as it goes around a curve? How do tire drag and deformation affect steering? Braking for a light seems simple enough, but good drivers don’t apply steady pressure, as a computer might. They build it gradually, hold it for a moment, then back off again.

For complicated moves like that, Thrun’s team often started with machine learning, then reinforced it with rule-based programming—a superego to control the id. They had the car teach itself to read street signs, for instance, but they underscored that knowledge with specific instructions: “STOP” means stop. If the car still had trouble, they’d download the sensor data, replay it on the computer, and fine-tune the response. Other times, they’d run simulations based on accidents documented by the National Highway Traffic Safety Administration. A mattress falls from the back of a truck. Should the car swerve to avoid it or plow ahead? How much advance warning does it need? What if a cat runs into the road? A deer? A child? These were moral questions as well as mechanical ones, and engineers had never had to answer them before. The DARPA cars didn’t even bother to distinguish between road signs and pedestrians—or “organics,” as engineers sometimes call them. They still thought like machines.

Four-way stops were a good example. Most drivers don’t just sit and wait their turn. They nose into the intersection, nudging ahead while the previous car is still passing through. The Google car didn’t do that. Being a law-abiding robot, it waited until the crossing was completely clear—and promptly lost its place in line. “The nudging is a kind of communication,” Thrun told me. “It tells people that it’s your turn. The same thing with lane changes: if you start to pull into a gap and the driver in that lane moves forward, he’s giving you a clear no. If he pulls back, it’s a yes. The car has to learn that language.”

It took the team a year and a half to master Page and Brin’s ten hundred-mile road trips. The first one ran from Monterey to Cambria, along the cliffs of Highway 1. “I was in the back seat, screaming like a little girl,” Levandowski told me. One of the last started in Mountain View, went east across the Dumbarton Bridge to Union City, back west across the bay to San Mateo, north on 101, east over the Bay Bridge to Oakland, north through Berkeley and Richmond, back west across the bay to San Rafael, south to the mazy streets of the Tiburon Peninsula, so narrow that they had to tuck in the side mirrors, and over the Golden Gate Bridge to downtown San Francisco. When they finally arrived, past midnight, they celebrated with a bottle of champagne. Now they just had to design a system that could do the same thing in any city, in all kinds of weather, with no chance of a do-over. Really, they’d just begun.

These days, Levandowski and the other engineers divide their time between two models: the Prius, which is used to test new sensors and software; and the Lexus, which offers a more refined but limited ride. (The Prius can drive on surface streets; the Lexus only on highways.) As the cars have evolved, they’ve sprouted appendages and lost them again, like vat-grown creatures in a science-fiction movie. The cameras and radar are now tucked behind sheet metal and glass, the laser turret reduced from a highway cone to a sand pail. Everything is smaller, sleeker, and more powerful than before, but there’s still no mistaking the cars. When Levandowski picked me up or dropped me off near the Berkeley campus on his commute, students would look up from their laptops and squeal, then run over to take snapshots of the car with their phones. It was their version of the Oscar Mayer Wienermobile.

Still, my first thought on settling into the Lexus was how ordinary things looked. Google’s experiments had left no scars, no signs of cybernetic alteration. The interior could have passed for that of any luxury car: burl-wood and leather, brushed metal and Bose speakers. There was a screen in the center of the dashboard for digital maps; another above it for messages from the computer. The steering wheel had an On button to the left and an Off button to the right, lit a soft, fibre-optic green and red. But there was nothing to betray their exotic purpose. The only jarring element was the big red knob between the seats. “That’s the master kill switch,” Levandowski said. “We’ve never actually used it.”

Levandowski kept a laptop open beside him as we rode. Its screen showed a graphic view of the data flowing in from the sensors: a Tron-like world of neon objects drifting and darting on a wireframe nightscape. Each sensor offered a different perspective on the world. The laser provided three-dimensional depth: its sixty-four beams spun around ten times per second, scanning 1.3 million points in concentric waves that began eight feet from the car. It could spot a fourteen-inch object a hundred and sixty feet away. The radar had twice that range but nowhere near the precision. The camera was good at identifying road signs, turn signals, colors, and lights. All three views were combined and color-coded by a computer in the trunk, then overlaid by the digital maps and Street Views that Google had already collected. The result was a road atlas like no other: a simulacrum of the world.

I was thinking about all this as the Lexus headed south from Berkeley down Highway 24. What I wasn’t thinking about was my safety. At first, it was a little alarming to see the steering wheel turn by itself, but that soon passed. The car clearly knew what it was doing. When the driver beside us drifted into our lane, the Lexus drifted the other way, keeping its distance. When the driver ahead hit his brakes, the Lexus was already slowing down. Its sensors could see so far in every direction that it saw traffic patterns long before we did. The effect was almost courtly: drawing back to let others pass, gliding into gaps, keeping pace without strain, like a dancer in a quadrille.

The Prius was an even more capable car, but also a rougher ride. When I rode in it with Dmitri Dolgov, the team’s lead programmer, it had an occasional lapse in judgment: tailgating a truck as it came down an exit ramp; rushing late through a yellow light. In those cases, Dolgov made a note on his laptop. By that night, he’d have adjusted the algorithm and run simulations till the computer got it right.

The Google car has now driven more than half a million miles without causing an accident—about twice as far as the average American driver goes before crashing. Of course, the computer has always had a human driver to take over in tight spots. Left to its own devices, Thrun says, it could go only about fifty thousand miles on freeways without a major mistake. Google calls this the dog-food stage: not quite fit for human consumption. “The risk is too high,” Thrun says. “You would never accept it.” The car has trouble in the rain, for instance, when its lasers bounce off shiny surfaces. (The first drops call forth a small icon of a cloud onscreen and a voice warning that auto-drive will soon disengage.) It can’t tell wet concrete from dry or fresh asphalt from firm. It can’t hear a traffic cop’s whistle or follow hand signals.

And yet, for each of its failings, the car has a corresponding strength. It never gets drowsy or distracted, never wonders who has the right-of-way. It knows every turn, tree, and streetlight ahead in precise, three-dimensional detail. Dolgov was riding through a wooded area one night when the car suddenly slowed to a crawl. “I was thinking, What the hell? It must be a bug,” he told me. “Then we noticed the deer walking along the shoulder.” The car, unlike its riders, could see in the dark. Within a year, Thrun added, it should be safe for a hundred thousand miles.

The real question is who will build it. Google is a software firm, not a car company. It would rather sell its programs and sensors to Ford or GM than build its own cars. The companies could then repackage the system as their own, as they do with G.P.S. units from NAVTEQ or TomTom. The difference is that the car companies have never bothered to make their own maps, but they’ve spent decades working on driverless cars. General Motors sponsored Carnegie Mellon’s DARPA races and has a large testing facility for driverless cars outside of Detroit. Toyota opened a nine-acre laboratory and “simulated urban environment” for self-driving cars last November, at the foot of Mt. Fuji. But aside from Nissan, which recently announced that it would sell fully autonomous cars by 2020, the manufacturers are much more pessimistic about the technology. “It’ll happen, but it’s a long way out,” John Capp, General Motors’ director of electrical, controls, and active safety research, told me. “It’s one thing to do a demonstration—‘Look, Ma, no hands!’ But I’m talking about real production variance and systems we’re confident in. Not some circus vehicle.”

When I went to visit the most recent International Auto Show in New York, the exhibits were notably silent about autonomous driving. That’s not to say that it wasn’t on display. Outside the convention center, Jeep had set up an obstacle course for its new Wrangler, including a row of logs to drive over and a miniature hill to climb. When I went down the hill with a Jeep sales rep, he kept telling me to take my foot off the brake. The car was equipped with “descent control,” he explained, but, like the other exhibitors, he avoided terms like “self-driving.” “We don’t even include it in our vocabulary,” Alan Hall, a communications manager at Ford, told me. “Our view of the future is that the driver remains in control of the vehicle. He is the captain of the ship.”

This was a little disingenuous—necessity passing as principle. The car companies can’t do full autonomy yet, so they do it piece by piece. Every decade or so, they introduce another bit of automation, another task gently lifted from the captain’s hands: power steering in the nineteen-fifties, cruise control as a standard feature in the seventies, antilock brakes in the eighties, electronic stability control in the nineties, the first self-parking cars in the two-thousands. The latest models can detect lane lines and steer themselves to stay within them. They can keep a steady distance from the car ahead, braking to a stop if necessary. They have night vision, blind-spot detection, and stereo cameras that can identify pedestrians. Yet the over-all approach hasn’t changed. As Levandowski puts it, “They want to make cars that make drivers better. We want to make cars that are better than drivers.”

Along with Nissan, Toyota and Mercedes are probably closest to developing systems like Google’s. Yet they hesitate to introduce them for different reasons. Toyota’s customers are a conservative bunch, less concerned with style than with comfort. “They tend to have a fairly long adoption curve,” Jim Pisz, the corporate manager of Toyota’s North American business strategy, told me. “It was only five years ago that we eliminated cassette players.” The company has been too far ahead of the curve before. In 2005, when Toyota introduced the world’s first self-parking car, it was finicky and slow to maneuver, as well as expensive. “We need to build incremental levels of trust,” Pisz said.

Mercedes has a knottier problem. It has a reputation for fancy electronics and a long history of innovation. Its newest experimental car can maneuver in traffic, drive on surface streets, and track obstacles with cameras and radar much as Google’s do. But Mercedes builds cars for people who love to drive, and who pay a stiff premium for the privilege. Taking the steering wheel out of their hands would seem to defeat the purpose—as would sticking a laser turret on a sculpted chassis. “Apart from the reliability factor, which can easily become a nightmare, it is not nice to look at,” Ralf Herrtwich, Mercedes’s director of driver assistance and chassis systems, told me. “One of my designers said, ‘Ralf, if you ever suggest building such a thing on top of one of our cars, I’ll throw you out of this company.’ ”

Even if the new components could be made invisible, Herrtwich says, he worries about separating people from the driving process. The Google engineers like to compare driverless cars to airplanes on autopilot, but pilots are trained to stay alert and take over in case the computer fails. Who will do the same for drivers? “This one-shot, winner-take-all approach, it’s perhaps not a wise thing to do,” Herrtwich says. Then again, alert, fully engaged drivers are already becoming a thing of the past. More than half of all eighteen-to-twenty-four-year-olds admit to texting while driving, and more than eighty per cent drive while on the phone. Hands-free driving should seem like second nature to them: they’ve been doing it all along.

One afternoon, not long after the car show, I got an unsettling demonstration of this from engineers at Volvo. I was sitting behind the wheel of one of their S60 sedans in the parking lot of the company’s American headquarters in Rockleigh, New Jersey. About a hundred yards ahead, they’d placed a life-size figure of a boy. He was wearing khaki pants and a white T-shirt and looked to be about six years old. My job was to try to run him over.

Volvo has less faith in drivers than most companies do. Since the seventies, it has kept a full-time forensics team on call at its Swedish headquarters, in Gothenburg. Whenever a Volvo gets into an accident within a sixty-mile radius, the team races to the scene with local police to assess the wreckage and injuries. Four decades of such research have given Volvo engineers a visceral sense of all that can go wrong in a car, and a database of more than forty thousand accidents to draw on for their designs. As a result, the chances of getting hurt in a Volvo have dropped from more than ten per cent to less than three per cent over the life of a car. The company says this is just a start. “Our vision is that no one is killed or injured in a Volvo by 2020,” it declared three years ago. “Ultimately, that means designing cars that do not crash.”

Most accidents are caused by what Volvo calls the four D’s: distraction, drowsiness, drunkenness, and driver error. The company’s newest safety systems try to address each of these. To keep the driver alert, they use cameras, lasers, and radar to monitor the car’s progress. If the car crosses a lane line without a signal from the blinker, a chime sounds. If a pattern emerges, the dashboard flashes a steaming coffee cup and the words “Time for a break.” To instill better habits, the car rates the driver’s attentiveness as it goes, with bars like those on a cell phone. (Mercedes goes a step further: its advanced cruise control won’t work unless at least one of the driver’s hands is on the wheel.) In Europe, some Volvos even come with Breathalyzer systems, to discourage drunken driving. When all else fails, the cars take preëmptive action: tightening the seat belts, charging the brakes for maximum traction, and, at the last moment, stopping the car.

This was the system that I was putting to the test in the parking lot. Adam Kopstein, the manager of Volvo’s automotive safety and compliance office, was a man of crisp statistics and nearly Scandinavian scruples. So it was a little unnerving to hear him urge me to go faster. I’d spent the first fifteen minutes trying to crash into an inflatable car, keeping to a sedate twenty miles an hour. Three-quarters of all accidents occur at this speed, and the Volvo handled it with ease. But Kopstein was looking for a sterner challenge. “Go ahead and hit the gas,” he said. “You’re not going to hurt anyone.”

I did as instructed. The boy was just a mannequin, after all, stuffed with reflective material to simulate the water in a human body. First a camera behind the windshield would identify him as a pedestrian. Then radar from behind the grille would bounce off his reflective innards and deduce the distance to impact. “Some people scream,” Kopstein said. “Others just can’t do it. It’s so unnatural.” As the car sped up—fifteen, twenty, thirty-five miles an hour—the warning chime sounded, but I kept my foot off the brake. Then, suddenly, the car ground to a halt, juddering toward the boy with a final double lurch. It came to a stop with about five inches to spare.

Since 2010, Volvos equipped with a safety system have had twenty-seven per cent fewer property-damage claims than those without it, according to a study by the Insurance Institute for Highway Safety. The system goes out of its way to leave the driver in charge, braking only in extreme circumstances and ceding control at the tap of a pedal or a turn of the wheel. Still, the car sometimes gets confused. Later that afternoon, I took the Volvo out for a test drive on the Palisades Parkway. I contented myself with steering, while the car took care of braking and acceleration. Like Levandowski’s Lexus, it quickly earned my trust: keeping pace with highway traffic, braking smoothly at lights. Then something strange happened. I’d circled back to the Volvo headquarters and was about to turn into the parking lot when the car suddenly surged forward, accelerating into the curve.

The incident lasted only a moment—when I hit the brakes, the system disengaged—but it was a little alarming. Kopstein later guessed that the car thought it was still on the highway, in cruise control. For most of the drive, I’d been following Kopstein’s Volvo, but when that car turned into the parking lot, my car saw a clear road ahead. That’s when it sped up, toward what it thought was the speed limit: fifty miles an hour.

To some drivers, this may sound worse than the four D’s. Distraction and drowsiness we can control, but a peculiar horror attaches to the thought of death by computer. The screen freezes or power fails; the sensors jam or misread a sign; the car squeals to a stop on the highway or plows headlong into oncoming traffic. “We’re all fairly tolerant of cell phones and laptops not working,” GM’s John Capp told me. “But you’re not relying on your cell phone or laptop to keep you alive.”

Toyota got a taste of such calamities in 2009, when some drivers began to complain that their cars would accelerate of their own accord—sometimes up to a hundred miles an hour. The news caused panic among Toyota owners: the cars were accused of causing thirty-nine deaths. But this proved to be largely fictional. A ten-month study by NASA and the National Highway Traffic Safety Administration found that most of the incidents were caused by driver error or roving floor mats, and only a few by sticky gas pedals. By then, Toyota had recalled some ten million cars and paid more than a billion dollars in legal settlements. “Frankly, that was an indicator that we need to go slow,” Jim Pisz told me. “Deliberately slow.”

An automated highway could also be a prime target for cyberterrorism. Last year, DARPA funded a pair of well-known hackers, Charlie Miller and Chris Valasek, to see how vulnerable existing cars might be. In August, Miller presented some of their findings at the annual Defcon hackers conference in Las Vegas. By sending commands from their laptop, they’d been able to make a Toyota Prius blast its horn, jerk the wheel from the driver’s hands, and brake violently at eighty miles an hour. True, Miller and Valasek had to use a cable to patch into the car’s maintenance port. But a team at the University of California, San Diego, led by the computer scientist Stefan Savage, has shown that similar instructions could be sent wirelessly, through systems as innocuous as a Bluetooth receiver. “Existing technology is not as robust as we think it is,” Levandowski told me.

Google claims to have answers to all these threats. Its engineers know that a driverless car will have to be nearly perfect to be allowed on the road. “You have to get to what the industry calls the ‘six sigma’ level—three defects per million,” Ken Goldberg, the industrial engineer at Berkeley, told me. “Ninety-five per cent just isn’t good enough.” Aside from its test drives and simulations, Google has encircled its software with firewalls, backup systems, and redundant power supplies. Its diagnostic programs run thousands of internal checks per second, searching for system errors and anomalies, monitoring its engine and brakes, and continually recalculating its route and lane position. Computers, unlike people, never tire of self-assessment. “We want it to fail gracefully,” Dolgov told me. “When it shuts down, we want it to do something reasonable, like slow down and go on the shoulder and turn on the blinkers.”

Still, sooner or later, a driverless car will kill someone. A circuit will fail, a firewall collapse, and that one defect in three hundred thousand will send a car plunging across a lane or into a tree. “There will be crashes and lawsuits,” Dean Pomerleau said. “And because the car companies have deep pockets they will be targets, regardless of whether they’re at fault or not. It doesn’t take many fifty- or hundred-million-dollar jury decisions to put a big damper on this technology.” Even an invention as benign as the air bag took decades to make it into American cars, Pomerleau points out. “I used to say that autonomous vehicles are fifteen or twenty years out. That was twenty years ago. We still don’t have them, and I still think they’re ten years out.”

If driverless cars were once held back by their technology, then by ideas, the limiting factor now is the law. Strictly speaking, the Google car is already legal: drivers must have licenses; no one said anything about computers. But the company knows that won’t hold up in court. It wants the cars to be regulated just like human drivers. For the past two years, Levandowski has spent a good deal of his time flying around the country lobbying legislatures to support the technology. First Nevada, then Florida, California, and the District of Columbia have legalized driverless cars, provided that they’re safe and fully insured. But other states have approached the issue more skeptically. The bills proposed by Michigan and Wisconsin, for instance, both treat driverless cars as experimental technology, legal only within narrow limits.

Much remains to be defined. How should the cars be tested? What’s their proper speed and spacing? How much warning do drivers need before taking the wheel? Who’s responsible when things go wrong? Google wants to leave the specifics to motor-vehicle departments and insurers. (Since premiums are based on statistical risk, they should go down for driverless cars.) But the car companies argue that this leaves them too vulnerable. “Their original position was ‘We shouldn’t rush this. It’s not ready for prime time. It shouldn’t be legalized,’ ” Alex Padilla, the state senator who sponsored the California bill, told me. But their real goal, he believes, was just to buy time to catch up. “It became clear to me that the interest here was a race to the market. And everybody’s in the race.” The question is how fast should they go.

At the tech meeting I attended, Levandowski showed the team a video of Google’s newest laser, slated to be installed within the year. It had more than twice the range of previous models—eleven hundred feet instead of two hundred and sixty—and thirty times the resolution. At three hundred feet, it could spot a metal plate less than two inches thick. The laser would be about the size of a coffee mug, he told me, and cost around ten thousand dollars—seventy thousand less than the current model.

“Cost is the least of my worries,” Sergey Brin had told me earlier. “Driving the price of technology down is like”—he snapped his fingers. “You just wait a month. It’s not fundamentally expensive.” Brin and his engineers are motivated by more personal concerns: Brin’s parents are in their late seventies and starting to get unsteady behind the wheel. Thrun lost his best friend to a car accident, and Urmson has children just a few years shy of driving age. Like everyone else at Google, they know the statistics: worldwide, car accidents kill 1.24 million people a year, and injure another fifty million.

For Levandowski, the stakes first became clear three years ago. His fiancée, Stefanie Olsen, was nine months pregnant at the time. One afternoon, she had just crossed the Golden Gate Bridge on her way to visit a friend in Marin County when the car ahead of her abruptly stopped. Olsen slammed on her brakes and skidded to a halt, but the driver behind her wasn’t so quick. He collided with her Prius at more than thirty miles an hour, pile-driving it into the car ahead. “It was like a tin can,” Olsen told me. “The car was totalled and I was accordioned in there.” Thanks to her seat belt, she escaped unharmed, as did her baby. But when Alex was born he had a small patch of white hair on the back of his head.

“That accident never should have happened,” Levandowski told me. If the car behind Olsen had been self-driving, it would have seen the obstruction three cars ahead. It would have calculated the distance to impact, scanned the neighboring lanes, realized it was boxed in, and hit the brakes, all within a tenth of a second. The Google car drives more defensively than people do: it tailgates five times less, rarely coming within two seconds of the car ahead. Under the circumstances, Levandowski says, our fear of driverless cars is increasingly irrational. “Once you make the car better than the driver, it’s almost irresponsible to have him there,” he says. “Every year that we delay this, more people die.”

After a long day in Mountain View, the drive home to Berkeley can be a challenge. Levandowski’s mind, accustomed to pinwheeling in half a dozen directions, can have trouble focussing on the two-ton hunks of metal hurtling around him. “People should be happy when I’m on automatic mode,” he told me, as we headed home one night. He leaned back in his seat and put his hands behind his head, as if taking in the seaside sun. He looked like the vintage illustrations of driverless cars on his laptop: “Highways made safe by electricity!”

The reality was so close that he could envision each step: The first cars coming to market in five to ten years. Their numbers few at first—strange beasts on a new continent—relying on sensors to get the lay of the land, mapping territory street by street. Then spreading, multiplying, sharing maps and road conditions, accident alerts and traffic updates; moving in packs, drafting off one another to save fuel, dropping off passengers and picking them up, just as Brin had imagined. For once it didn’t seem like a fantasy. “If you look at my track record, I usually do something for two years and then I want to leave,” Levandowski said. “I’m a first-mile kind of guy—the guy who rushes the beach at Normandy, then lets other people fortify it. But I want to see this through. What we’ve done so far is cool; it’s scientifically interesting; but it hasn’t changed people’s lives.”

When we arrived at his house, his family was waiting. “I’m a bull!” his three-year-old, Alex, roared as he ran up to greet us. We acted suitably impressed, then wondered why a bull would have long whiskers and a red nose. “He was a kitten a little while ago,” his mother whispered. A former freelance reporter for the Times and CNET, Olsen was writing a techno-thriller set in Silicon Valley. She worked from home now, and had been cautious about driving since the accident. Still, two weeks earlier, Levandowski had taken her and Alex on their first ride in the Google car. She was a little nervous at first, she admitted, but Alex had wondered what all the fuss was about. “He thinks everything’s a robot,” Levandowski said.

While Olsen set the table, Levandowski gave me a brief tour of their place: an Arts and Crafts house from 1909, once home to a hippie commune led by Tom Hayden. “You can still see the burn marks on the living-room floor,” he said. For a registered Republican and a millionaire many times over, it was a quirky, modest choice. Levandowski probably could have afforded that stateroom in a 747 by now, and made good use of it. Last year alone, he flew more than a hundred thousand miles in his lobbying efforts. There was just one problem, he said. It was irrational, he knew. It went against all good sense and a raft of statistics, but he couldn’t help it. He was afraid of flying.

Source: http://www.newyorker.com/reporting/2013/11/25/131125fa_fact_bilger?currentPage=all

But does this still hold today? In recent years, with the handover from Steve Jobs to Tim Cook, the prophecies of doom have grown ever louder. Are Apple’s best days already behind it? Will the company get into mobile payment? With what offerings, and in what role? Might it be winding up for a sweeping blow? Or has the train perhaps already left the station, because it has not yet integrated NFC (Near Field Communication)? Or is the “Android strategy” of an open ecosystem even sounding the death knell for the company?
